This post is based on a talk I delivered at the third PKM Summit in Utrecht on March 20, 2026.

I’m pleased to talk PKM in a city where Erasmus of Rotterdam — one of my intellectual heroes — spent some of his early years. This presentation has two purposes. First, I’ll give you a different frame to think about personal knowledge management. Then, I’ll share ways to use AI effectively in that context. I expect Erasmus would’ve been tickled!

Last year, Lou Rosenfeld sent me an email asking if I’d seen a post by Joan Westenberg titled I Deleted My Second Brain. Back in 2024, Lou published my book Duly Noted, which shows you why and how to build a PKM system. Westenberg’s post argued against doing just that. Naturally, Lou wondered what I thought.

I won’t recap the whole post. The TL;DR: the author built an elaborate PKM system. After some time, the system wasn’t producing the expected results, so they got rid of it. Rather than summarize further, I’ll cite a couple of representative passages:

In trying to remember everything, I outsourced the act of reflection. I didn’t revisit ideas. I didn’t interrogate them. I filed them away and trusted the structure. But a structure is not thinking. A tag is not an insight. And an idea not re-encountered might as well have never been had.

And:

When I first started using PKM tools, I believed I was solving a problem of forgetting. Later, I believed I was solving a problem of integration. Eventually, I realized I had created a new problem: deferral. The more my system grew, the more I deferred the work of thought to some future self who would sort, tag, distill, and extract the gold.

That self never arrived.

It’s a good and nuanced post, and you should read it. That said, it doesn’t cover new ground. Even when I was writing the book in 2022–23, there were already posts with titles like Note-taking Became a Full-time Job, so I Stopped, Personal Knowledge Management Is Exhausting, and — my favorite! — Personal Knowledge Management Is Bullshit.

They all trace a similar arc: lured by visions of increased productivity, the author builds an elaborate PKM system. They spend lots of time capturing notes, tagging, linking, organizing, etc. But the expected results never come. Eventually, the author decides the effort is futile, and gives up. Deleting the system brings a sense of relief and renewed agency, which they feel compelled to share.

I’m not here to diss these people. PKMs aren’t for everyone. If it’s not for you, the sooner you stop, the better — perhaps. But I also believe mindset influences the value you get from these systems. And unfortunately, the most common framing for PKMs sets the wrong mindset. It’s the metaphor in the title of Westenberg’s post: second brain.

Problems with the “second brain” metaphor

In Metaphors We Live By, Lakoff and Johnson explain that metaphors don’t just reveal how we talk about things; they also reveal and inform how we think about things, deep down. Which is to say, metaphors matter. And I’ve come to believe the “second brain” metaphor leads to bad thinking about PKMs.

Before proceeding, I’ll say upfront that I admire Tiago Forte’s work. His PARA taxonomy has influenced me. And on the upside, thinking of PKMs as a “second brain” has brought lots of people into the fold.

That said, I think the “second brain” metaphor has three problems:

- It implies delegating cognition. The promised outcome is a prosthetic mind. That is, the system will relieve you of thinking and (especially!) long-term recall. (Westenberg: “I believed I was solving a problem of forgetting.”)

- It sets expectations PKMs can’t meet. This isn’t a promise current PKMs, even with AI, can deliver. The system won’t “extract the gold,” at least not without a long time and a lot of work on your part.

- These are bad expectations to begin with. Even if PKMs could do this, you shouldn’t want it. If you want to think better, your goal shouldn’t be to delegate your thinking: it should be to enable your first brain to work better.

Enter the knowledge garden

A more fruitful metaphor for PKMs is that of a garden. Many of us already talk about our PKM systems as “places” where we do focused work. This is why I like the garden metaphor: it’s about building a context for you to think in rather than a thing that thinks for you. But it goes beyond that.

![Garden arches](/assets/images/2026/03/garden-arches.jpg)

Photo by Veronica Reverse on [Unsplash](https://unsplash.com/photos/single-perspective-of-pathway-leading-to-house-qYwyRF9u-uo?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText)

There are different kinds of gardens for different purposes. Some are for pleasure, while others are for growing food. Some are industrial; others artisanal. What they all have in common: things grow there. And it doesn’t happen overnight, but after much toil in the soil. For a garden to fulfill its purpose — whatever it might be — it must be stewarded over a long time.

Also, a garden’s structure can’t be rigidly top-down. While some structure is needed, the place’s form emerges over time as it meets real-world needs. Thinking about PKM as a productivity hack leads to overemphasizing upfront structures and workflows at the expense of the more patient approach required by organic processes.

Finally, for many gardeners, the fruit is only part of their garden’s value. Gardening is pleasurable per se. It’s not something they do just because they want to eat. After all, it’s cheaper and easier to go to the supermarket. Instead, they garden because they find it fulfilling.

Many a garden’s deeper purpose is providing the kind of groundedness that comes from putting your hands in the soil and nurturing living things. The fruit that comes from such a place tastes better than what you buy from the store, even if (or perhaps because) you’ve put a lot of work into it.

A garden provides solace and recreation — the opposite of the anxiety that overhangs systems built as productivity hacks. My PKM system provides solace and recreation. So I call it my “knowledge garden,” riffing on the popular digital garden metaphor and Andy Matuschak’s evergreen notes, among others.

I approach my knowledge garden with Field Notes’s tagline in mind: “I’m not writing it down to remember it later, I’m writing it down to remember it now.” I don’t keep a PKM to remember things later, but because writing, structuring, and connecting ideas is how I think. That the words are there for recall later is a bonus, not the main attraction. Clearer thinking is the “gold”; the notes merely record that it happened.

But if the point is creating a place for your first brain to work better, that raises an increasingly pressing question: what role should AI — which is being explicitly framed as a prosthetic mind — play there?

AI as amanuensis

To explain how to use AI in a knowledge garden, I’ll refer back to what I wrote in Duly Noted. While I wrote the bulk of the book before ChatGPT came out, the final chapter covers this topic. I’ll offer a quick summary here, but I’ve shared a longer post should you want to dive deeper.

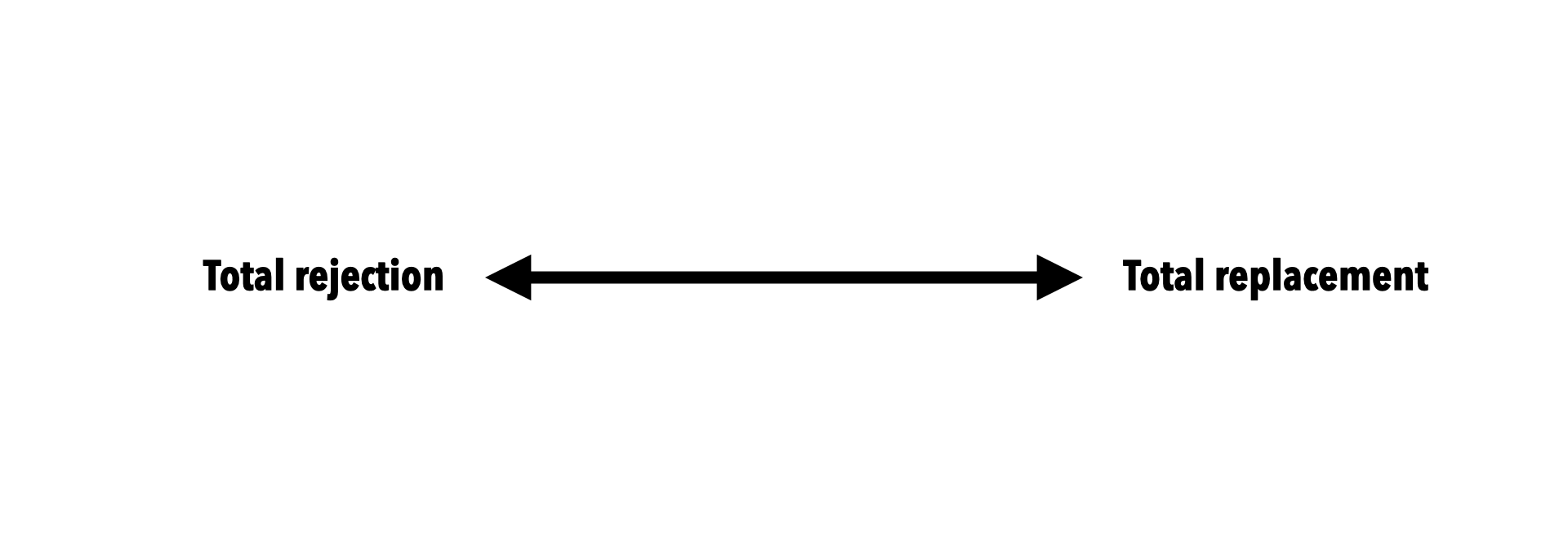

When thinking about your relationship with AI in general, it helps to consider a spectrum. On one end, you reject the technology completely: you don’t want it anywhere near your notes. On the other end, the AI completely replaces you.

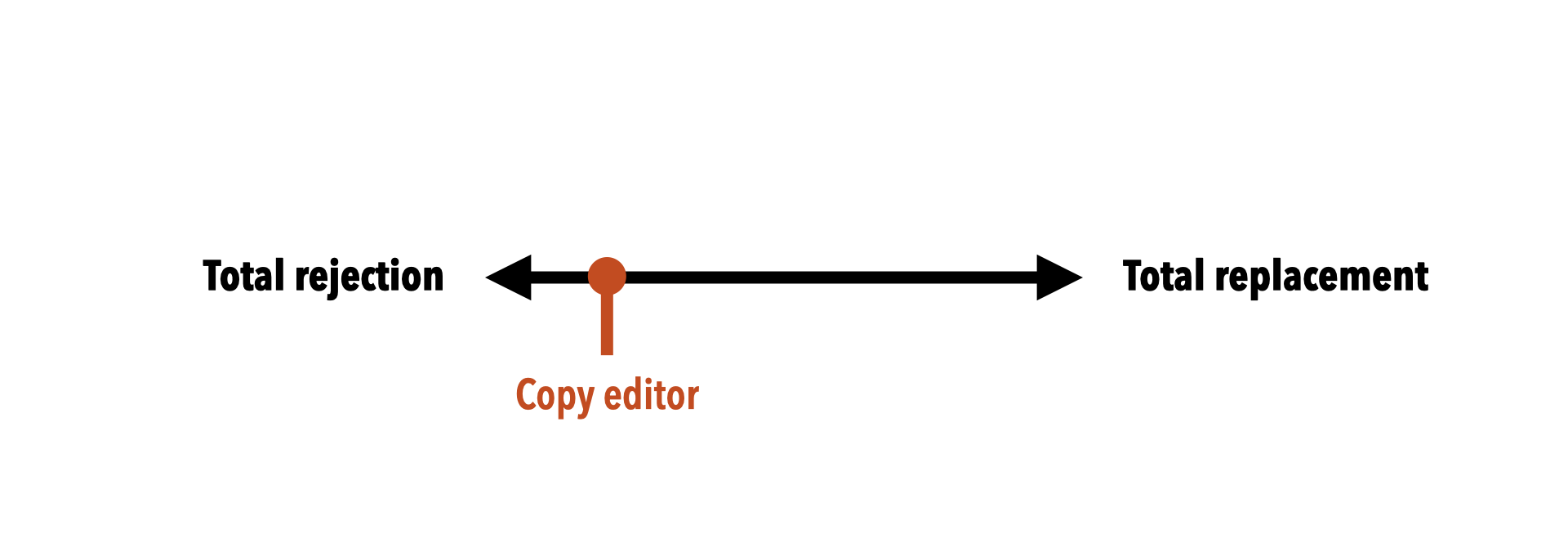

Neither extreme is desirable, so most approaches fall somewhere on the spectrum. Toward the “rejection” end, you use AI merely as a copy editor, correcting your spelling and grammar. Millions of people already use tools like Grammarly, and likely won’t object to using AI in this capacity.

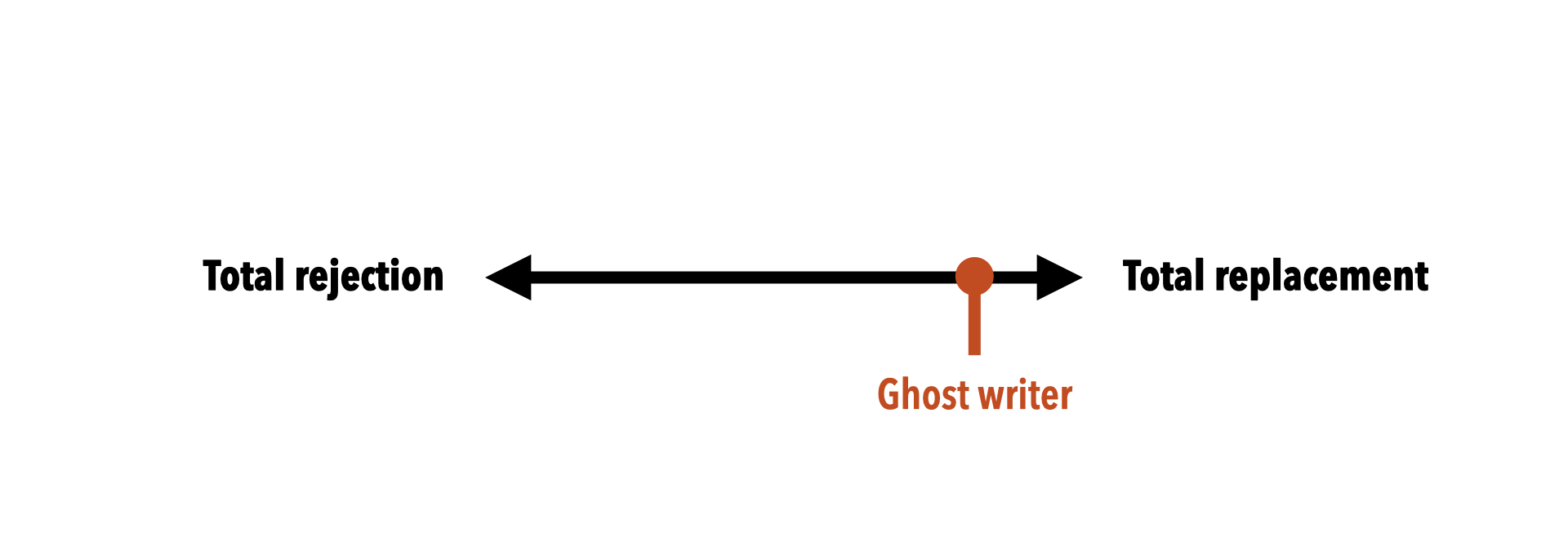

Toward the other end, you use AI to write for you. I call this role the ghost writer, although for many people it’s become a ghost thinker.

I get the most value around the middle of the spectrum.

There’s a historical precedent here. Some early modern scholars employed live-in secretaries to do various tasks for them: researching, indexing, archiving, retrieving, organizing, translating, summarizing, and running errands. While not as famous as their employers, these people were often seen more as collaborators than anonymous servants. They were called amanuenses.

![Erasmus of Rotterdam working alongside his amanuensis Gilbert Cousin](/assets/images/2026/03/erasmus-cousin.jpg)

Erasmus of Rotterdam working alongside his amanuensis Gilbert Cousin, via Wikimedia

I consider amanuensis to be the ideal role for AIs in your knowledge garden.

Robots in the garden

This sounds nice in theory, but how does it work in practice? To find out, I undertook a major personal project last year. It’s something I’d always wanted to do: read through the humanities — the major texts that have shaped civilization: Homer to Faulkner, Plato to Freud, the Book of Job to the Communist Manifesto.

Daunting, right? Of course, it’s impossible to do comprehensively in a year. I followed Ted Gioia’s excellent syllabus, which curates key texts, artworks, and musical masterpieces into 52 weeks with reasonable limits. (As you’ll see below, I added cinema to the mix.)

There were two goals to the project. Primarily, I aimed to learn about the main ideas that have shaped our world. But this was also an opportunity to explore how AI might help with such an undertaking. I blogged what I learned every week, including how I used AI.

It was a messy process. That’s what you do in a garden! And the outcome wasn’t an enthusiastic endorsement of AI. Instead, I landed on a map of roles and modalities for how AI can help at different points on the spectrum. Let’s look at nine of these roles.

1. Tutor

The simplest role for AI is as a tutor. You ask it to explain a difficult concept, clarify a confusing passage, translate jargon, etc. I mostly did this via the standard chat UI (although I created a ChatGPT project to preserve context for the course).

Example:

While reading Freud’s The Interpretation of Dreams, I came across three unfamiliar German terms: es, ich, and über-ich. ChatGPT helpfully explained these are more commonly known as id, ego, and superego — three terms I already understood.

Suggested prompt:

I just read [PASSAGE]. I understand [X] but I’m confused about [Y]. Can you explain [Y] in plain terms, without assuming I have background in [FIELD]?
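If you reuse prompts like this one often, you can keep them as templates and fill in the bracketed slots with a few lines of code. What follows is a minimal sketch in Python, not a description of any particular tool: the `fill_prompt` helper and the sample values are hypothetical. It only builds the prompt text. Pasting or sending it to a chatbot is left to you.

```python
import re

# The tutor prompt from above, with its slots left in square brackets.
TUTOR_PROMPT = (
    "I just read [PASSAGE]. I understand [X] but I'm confused about [Y]. "
    "Can you explain [Y] in plain terms, without assuming I have "
    "background in [FIELD]?"
)


def fill_prompt(template: str, values: dict[str, str]) -> str:
    """Replace each [SLOT] in the template with its value.

    Raises KeyError if a slot has no value, so an unfilled placeholder
    never reaches the chatbot by accident.
    """
    def substitute(match: re.Match) -> str:
        slot = match.group(1)
        if slot not in values:
            raise KeyError(f"No value provided for slot [{slot}]")
        return values[slot]

    # Slots are uppercase words in square brackets, e.g. [PASSAGE] or [WORK A].
    return re.sub(r"\[([A-Z][A-Z ]*)\]", substitute, template)


# Hypothetical sample values, for illustration only.
prompt = fill_prompt(TUTOR_PROMPT, {
    "PASSAGE": "a passage from The Interpretation of Dreams",
    "X": "the general idea of the unconscious",
    "Y": "the terms es, ich, and über-ich",
    "FIELD": "psychoanalysis",
})
print(prompt)
```

The same helper works for the other suggested prompts in this post, since they all use the same bracketed-slot convention.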

2. Validator

Another basic role for AI is validating your understanding. To do this, you ask it to review your notes for errors or gaps, do basic fact-checking, or critique your reasoning. Again, you can do this via the chat interface, but I also experimented with passing it my notes from Obsidian using the Copilot plugin and from Emacs using gptel.

Example:

After reading The Epic of Gilgamesh, I wrote a note in Obsidian summarizing its plot. When I asked ChatGPT to critique my summary, it pointed out that I’d given the central character a redemption arc that isn’t present in the text. I’m so accustomed to the standard hero’s journey that I projected it onto the book, and an LLM helped me correct this ‘hallucination.’

Suggested prompt:

Here are my notes on [WORK]. What important ideas did I miss or underemphasize? Don’t rewrite my notes — just flag the gaps.

3. Connector

Here’s yet another role you can easily do via chat: identifying thematic, philosophical, or narrative parallels between works. Note I wrote “works” — it’s fun and illuminating to ask for connections across media, genre, time, etc.

Example:

I watched Francis Ford Coppola’s The Conversation the same week I read Oedipus Rex. For fun, I asked ChatGPT for possible parallels between the two works. Its reply was enlightening: it pointed out how the protagonists of both stories undertook an obsessive investigation that uncovered terrible knowledge.

Suggested prompt:

I’ve been reading [WORK A] and [WORK B]. What philosophical or thematic threads connect them? I’m looking for non-obvious resonances, not surface similarities.

4. Orienter

This role is something of an inversion of the validator. Instead of asking for feedback on your notes after reading a text, here you ask the AI for guidance before reading. You’re looking for framing, historical context, high level outlines, etc. — ideally, without spoilers.

Example:

Before reading Nietzsche’s Beyond Good and Evil and Tolstoy’s The Death of Ivan Ilyich, I uploaded both books to NotebookLM, which created a podcast for me that explained their thematic contexts. Listening to this podcast on my daily walk helped me better understand the readings.

Suggested prompt:

I’m about to read [WORK] for the first time. Give me enough context to make sense of it — historical background, key arguments, things to watch for — but don’t spoil the experience of discovering it myself.

5. Recommender

This is a useful role for deepening your understanding of a subject: asking for related works that reflect similar themes. It’s also a use case where I noticed considerable improvements in LLM performance over 2025.

Example:

Early in 2025, I read Confucius’s Analects. Perplexity was ahead in web-backed interactions at the time, so I asked it for a list of classic Chinese movies that reflected Confucian values. It responded with five suggestions, some of which it hallucinated. But one of them, Spring in a Small Town, was a bona fide classic — and I likely wouldn’t have learned of it without an LLM. (Later in the year, other chatbots gained this ability and hallucinations dropped across the board.)

Suggested prompt:

I just finished [WORK]. Recommend three films that explore similar themes or ideas. Prioritize films with strong critical reputations — I’d rather have one great recommendation than five mediocre ones.

6. Adversary

Here’s a fun role: asking an LLM to push back on your position or steelman the opposing point of view. The idea is to expand your understanding by bringing your assumptions to the surface and challenging them.

Example:

After watching Modern Times, I asked ChatGPT to correct my understanding of the movie as a work of Marxist propaganda. The LLM convinced me that the film is in fact more of a humanist statement than a political one. As a result of this interaction, I changed my mind on Chaplin’s work.

Suggested prompt:

Here are my notes on [TOPIC]. Please help me see it through the lens of someone who might be sympathetic to [OPPOSING POSITION] without fully realizing it. What could I improve? Where is my argument weakest? [paste notes]

7. Analyst

This role will also help you appreciate a work from a different perspective. It’s easy: you ask the LLM to apply a specific critical lens to a reading. Common lenses include Freudian, Marxist, feminist, Girardian, etc.

Example:

The same week I read Freud, my son and I watched Predator, the 1980s sci-fi film starring Arnold Schwarzenegger. For fun, I asked ChatGPT to analyze the film through a Freudian lens. The result was both enlightening and hilarious.

Suggested prompt:

Apply a [Marxist / feminist / postcolonial / Jungian] reading to [WORK]. What does this lens reveal that a neutral summary would miss?

8. Mapper

This one’s a bit more esoteric. Some people (me included) are primarily visual: diagrams and drawings aid our understanding. Concept maps can be especially helpful. I’ve built an Agent Skill to allow LLMs like Claude to draw concept maps. (Download it from GitHub.)

Example:

I used this mapping skill to generate a concept map of Virginia Woolf’s To the Lighthouse. The result isn’t especially insightful; it’s more a proof of concept for using LLMs in a visual modality.

Suggested prompt:

(Note: install my LLMapper Skill before issuing this prompt)

Generate a concept map for [WORK] centered on the question: “How does the novel’s treatment of [THEME] illuminate [BROADER QUESTION]?”

9. Reflector

This final role is different. Whereas the others took as the object of inquiry a particular work — e.g., a novel or a movie — this last one takes as the object your knowledge garden itself. That is, you point the LLM to a series of notes to analyze patterns over time and suggest improvements.

Example:

I fed all 52 weekly posts from my humanities crash course to Claude Code, and asked it to identify the various roles in which I used AI for learning throughout the year. Its answers — with some curation from me — are the roles you just read.

Suggested prompt:

Here are my notes from [X weeks/months] of reading on [TOPIC]. What patterns do you notice in what I pay attention to? What do I seem to find most interesting, and what do I seem to avoid or underweight?
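If you’re working in a chat interface rather than a tool that can read your files directly, you’ll need to gather your notes into a single prompt first. Here’s a minimal sketch of one way to do that in Python. It assumes your notes are Markdown files in one folder, named so they sort chronologically. The `build_reflector_prompt` function, the file names, and the sample notes are all hypothetical.

```python
import tempfile
from pathlib import Path

# The reflector prompt from above, with its two slots as named fields.
REFLECTOR_PROMPT = (
    "Here are my notes from {span} of reading on {topic}. "
    "What patterns do you notice in what I pay attention to? "
    "What do I seem to find most interesting, and what do I seem "
    "to avoid or underweight?"
)


def build_reflector_prompt(notes_dir: Path, span: str, topic: str) -> str:
    """Place every non-empty Markdown note in notes_dir under the prompt."""
    sections = []
    # Sort by filename so notes named by date or week number stay in order.
    for path in sorted(notes_dir.glob("*.md")):
        text = path.read_text(encoding="utf-8").strip()
        if text:  # skip empty notes
            sections.append(f"## {path.stem}\n\n{text}")
    if not sections:
        raise ValueError(f"No non-empty Markdown notes found in {notes_dir}")
    header = REFLECTOR_PROMPT.format(span=span, topic=topic)
    return header + "\n\n" + "\n\n".join(sections)


# Demo with two made-up notes in a temporary folder.
with tempfile.TemporaryDirectory() as tmp:
    notes = Path(tmp)
    (notes / "week-01.md").write_text(
        "Gilgamesh: no redemption arc.", encoding="utf-8")
    (notes / "week-02.md").write_text(
        "Analects: ritual and virtue.", encoding="utf-8")
    print(build_reflector_prompt(notes, "2 weeks", "the humanities"))
```

Labeling each note with its file name gives the LLM a way to refer back to specific notes when it describes patterns over time. For a large collection, the combined text may exceed what a chatbot accepts in one message, so you may need to work in batches.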

Takeaways

This list isn’t comprehensive. I’m still experimenting and would love to learn from your experiments as well.

To wind down, I’ll summarize with three key points:

- Don’t try to build a brain. Instead, grow a garden. Metaphors matter. Stop thinking of your PKM system as a prosthetic mind. Instead, think of it as a place you can go to think.

- Use AI to help you think and learn better. AI can help you think better. But use it intentionally. Aim to land somewhere between “replacement” and “rejection.”

- Think calm… and long-term. Learning to think better is a lifelong project. Your knowledge garden is where it happens.

This isn’t a productivity hack. Results won’t come in a year. Results might not come after seven years. Thinking in terms of “results” might be wrong altogether.

You’re building a place to think. And not just any place: a living place that changes and grows over time. It’ll be messy. Good. But if you work on it, it’ll grow more beautiful and fruitful over time. You’ll get peace and satisfaction, even in its imperfection. And the sooner you start, the more material you’ll have for the AIs to help.

I’ll close with this passage from Montaigne, which I hope captures the spirit of what I’ve told you today:

When I dance, I dance; when I sleep, I sleep; yes, and when I walk alone in a beautiful orchard, if my thoughts have been concerned with extraneous incidents for some part of the time, for some other part I lead them back again to the walk, to the orchard, to the sweetness of this solitude, and to myself. Nature has in motherly fashion observed this principle, that the actions she has enjoined on us for our need should also give us pleasure; and she invites us to them not only through reason, but also through appetite. It is wrong to infringe her laws.